Big Data Characteristics

Big data refers to datasets so large and complex, in volume, velocity, and variety, that traditional computing systems struggle to manage and analyze them. Turning this data into meaningful value and actionable insights requires technologies and analytical methods built for that scale.

This article provides an overview of the main concepts that define and characterize big data. Understanding these fundamental principles is key to leveraging big data effectively.

Characteristics of Big Data

Big data is a hot topic in data management due to its potential for driving transformative change across industries through data-driven decision making. But what exactly constitutes big data? Here are the key attributes that set big data apart:

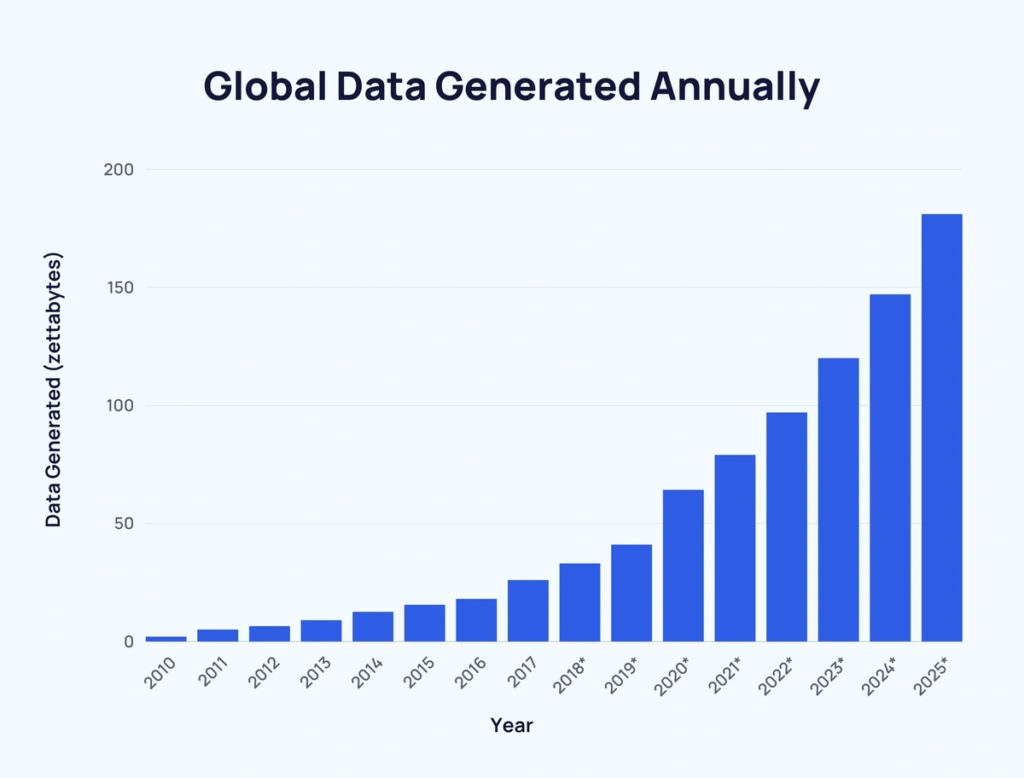

Volume

The volume of data being generated and stored has grown dramatically. By one widely cited estimate, 90% of the world’s data was created in just the last 2 years.

Volume refers to the sheer amount of data involved, measured in terabytes, petabytes, and even zettabytes. Social media posts, digital pictures, videos, purchase transaction records, cellphone GPS signals, and more all add to the total.

Source: ExplodingTopics
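
To make the units above concrete, here is a minimal sketch that converts between decimal (SI) storage units and estimates daily volume. The workload figures (2 million events per second at 500 bytes each) are hypothetical and chosen only for illustration.

```python
# Decimal (SI) storage units, each 1000x the previous one.
UNITS = ["B", "KB", "MB", "GB", "TB", "PB", "EB", "ZB"]


def to_bytes(value: float, unit: str) -> float:
    """Convert a value in the given SI unit to bytes."""
    return value * 1000 ** UNITS.index(unit)


def humanize(num_bytes: float) -> str:
    """Render a byte count using the largest unit that keeps the value >= 1."""
    size = float(num_bytes)
    for unit in UNITS:
        if size < 1000 or unit == UNITS[-1]:
            return f"{size:.1f} {unit}"
        size /= 1000


# Hypothetical workload: 2 million events per second at 500 bytes each.
bytes_per_day = 2_000_000 * 500 * 86_400
print(humanize(bytes_per_day))  # 86.4 TB
```

At that assumed rate, a single day of raw events already approaches a tenth of a petabyte before any replication or indexing overhead.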

Velocity

Velocity means the speed at which new data is being generated and the pace at which data moves from one point to the next. RFID tags, sensors, smart meters and other monitoring devices are driving the need for real-time or near real-time processing. The flow of data is massive and continuous.

Variety

Variety means the diversity of data types and sources. Structured data in traditional databases represents only a fraction of big data. The rest is unstructured or semi-structured data from text documents, email, video, audio, financial transactions, and social media. This variety of formats presents new data integration and analytics challenges.
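
The integration challenge can be sketched with three toy inputs: a CSV table (structured), a JSON payload with optional fields (semi-structured), and a sentence of free text (unstructured). The field names, sample data, and the regular expression are all assumptions made for this example; real pipelines need far more robust parsing.

```python
import csv
import io
import json
import re


def from_csv(text: str) -> list[dict]:
    """Structured input: rows that follow a fixed header."""
    return [
        {"source": "csv", "user": row["user"], "amount": float(row["amount"])}
        for row in csv.DictReader(io.StringIO(text))
    ]


def from_json(text: str) -> list[dict]:
    """Semi-structured input: nested objects, some fields optional."""
    return [
        {
            "source": "json",
            "user": obj["profile"]["name"],
            "amount": float(obj.get("amount", 0.0)),  # default when missing
        }
        for obj in json.loads(text)
    ]


def from_text(text: str) -> list[dict]:
    """Unstructured input: extract fields from free text with a regex."""
    pattern = re.compile(r"(\w+) paid \$(\d+(?:\.\d+)?)")
    return [
        {"source": "text", "user": user, "amount": float(amount)}
        for user, amount in pattern.findall(text)
    ]


csv_data = "user,amount\nalice,10.5\nbob,3\n"
json_data = '[{"profile": {"name": "carol"}, "amount": 7}, {"profile": {"name": "dave"}}]'
text_data = "Yesterday erin paid $12.25 at the kiosk, and frank paid $4."

# All three formats end up in one common record shape.
records = from_csv(csv_data) + from_json(json_data) + from_text(text_data)
for record in records:
    print(record)
```

Each source needs its own parser, but once the records share a schema they can be stored and analyzed together.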

Veracity

Veracity refers to the noise, bias and abnormalities inherent in large datasets impacting the accuracy and integrity of the data. With big data, quality and truthfulness cannot be taken for granted due to inaccuracies and inconsistencies.
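
A few basic checks illustrate what assessing veracity can look like in practice. The sketch below counts missing values, repeated record ids, and outliers in a small list of hypothetical sensor readings. The outlier rule (more than 5 median absolute deviations from the median) is an assumed cutoff, not a standard; it should be tuned for each dataset.

```python
from statistics import median


def quality_report(readings: list[dict], cutoff: float = 5.0) -> dict:
    """Summarize missing values, duplicate ids, and outliers."""
    missing = sum(1 for r in readings if r["value"] is None)

    # Track ids we have already seen to spot repeated records.
    seen, duplicate_ids = set(), []
    for r in readings:
        if r["id"] in seen:
            duplicate_ids.append(r["id"])
        seen.add(r["id"])

    # Assumes at least one non-missing value is present.
    values = [r["value"] for r in readings if r["value"] is not None]
    center = median(values)
    # Median absolute deviation is robust to the outliers being hunted.
    mad = median(abs(v - center) for v in values)
    outliers = [v for v in values if mad > 0 and abs(v - center) > cutoff * mad]

    return {"missing": missing, "duplicate_ids": duplicate_ids, "outliers": outliers}


readings = [
    {"id": 1, "value": 20.1},
    {"id": 2, "value": 19.8},
    {"id": 3, "value": None},
    {"id": 4, "value": 20.3},
    {"id": 5, "value": 950.0},  # likely a sensor glitch
    {"id": 2, "value": 19.8},   # repeated record
]
report = quality_report(readings)
print(report)
```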

Value

Value refers to the meaningful insights extracted from the deluge of big data and their use for competitive advantage. The purpose of big data analytics is ultimately to create value.

Sources of Big Data

Big data streams in from a myriad of sources, both inside and outside an organization. The major sources contributing to the big data phenomenon include:

- Machine Data: IT infrastructure such as servers, networks, and sensors produces immense volumes of machine-generated data, including logs, clickstreams, and application performance data.

- Social Data: Social networking platforms like Facebook, Twitter, Instagram, and LinkedIn are driving a data explosion through posts, photos, videos, and interactions.

- Transaction Data: Point-of-sale devices, ecommerce applications, and ERP systems record transactional interactions, with details on every exchange.

- Sensor Data: Sensors embedded in devices and equipment produce telemetry data tracking usage patterns, performance, temperature, motion, and other metrics.

- Public Data: Open data released by governments as well as crowdsourced data from citizen scientists and enthusiasts add to the data deluge.

- Commercial Data: Vendors sell data on demographics, psychographics, attitudes, and behaviors collected from surveys, warranty cards, subscriptions, and more.

Big Data Technology Stack

A robust technology ecosystem enables organizations to effectively and efficiently harness value from big data. This stack powers the full big data pipeline – from data ingestion to storage, processing, analysis and visualization.

Ingestion and Collection Tools

Specialized tools like Flume, Kafka, Scribe, Logstash, Chukwa, and Beats ingest and collect streaming data from diverse sources into unified data lakes and warehouses. They handle real-time data feeds as well as historic data loads with reliability and scalability.
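
The core idea behind these collectors can be sketched in a few lines: accept events from several sources, tag them with their origin, and deliver them downstream in batches. The `BatchingCollector` class below is hypothetical and is not the API of any tool named above; real ingestion tools add durability, retries, and backpressure.

```python
from typing import Callable


class BatchingCollector:
    """Buffers events from many sources and hands them to a sink in batches."""

    def __init__(self, batch_size: int, sink: Callable[[list], None]):
        self.batch_size = batch_size
        self.sink = sink
        self.buffer: list[dict] = []

    def ingest(self, source: str, payload: str) -> None:
        # Tag each event with its origin so later stages can route it.
        self.buffer.append({"source": source, "payload": payload})
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        # Deliver whatever is buffered, including a final partial batch.
        if self.buffer:
            self.sink(list(self.buffer))
            self.buffer.clear()


batches = []  # stand-in for a data lake or warehouse writer
collector = BatchingCollector(batch_size=2, sink=batches.append)
for source, payload in [
    ("web-log", "GET /home"),
    ("sensor", "temp=21.5"),
    ("web-log", "GET /cart"),
    ("app", "login ok"),
    ("sensor", "temp=21.7"),
]:
    collector.ingest(source, payload)
collector.flush()

print([len(batch) for batch in batches])  # [2, 2, 1]
```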

Distributed File Systems

Distributed file systems like HDFS, MapR-FS, and Ceph provide the foundation for scalable, reliable, and cost-effective storage of massive datasets. They split files into blocks, replicate those blocks across commodity servers, and tolerate hardware failures.
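
The following sketch illustrates the general idea of splitting a file into blocks and keeping several copies of each block on different servers. The 128 MB block size, the replication factor of 3, and the round-robin placement are assumptions for this example; production systems use more sophisticated, topology-aware placement policies.

```python
BLOCK_SIZE_MB = 128  # assumed block size
REPLICATION = 3      # assumed number of copies per block


def place_blocks(file_size_mb: int, nodes: list[str],
                 block_size: int = BLOCK_SIZE_MB,
                 replication: int = REPLICATION) -> dict[int, list[str]]:
    """Split a file into blocks and assign each block to distinct nodes."""
    if replication > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    num_blocks = -(-file_size_mb // block_size)  # ceiling division
    return {
        block: [nodes[(block + i) % len(nodes)] for i in range(replication)]
        for block in range(num_blocks)
    }


def surviving_replicas(placement: dict[int, list[str]],
                       failed_node: str) -> dict[int, list[str]]:
    """Return the replicas still reachable after one node fails."""
    return {
        block: [node for node in replicas if node != failed_node]
        for block, replicas in placement.items()
    }


nodes = ["node-a", "node-b", "node-c", "node-d"]
placement = place_blocks(500, nodes)
after_failure = surviving_replicas(placement, "node-b")

print(placement)
print(after_failure)
```

A 500 MB file becomes four blocks here, and every block remains readable from at least two other nodes after `node-b` fails.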

Databases

SQL and NoSQL databases store and organize big data for further processing. NoSQL databases like HBase, Cassandra, and MongoDB flexibly manage unstructured and semi-structured data. SQL-on-Hadoop technologies like Hive, Spark SQL, Presto, and Impala enable SQL queries over distributed data. Time-series databases like InfluxDB are optimized for storing and analyzing time-stamped data.

Cluster Resource Managers

Solutions like YARN, Mesos, and Kubernetes abstract the distributed system resources and enable multiple applications to share a cluster by dynamically allocating resources like CPU, memory, disk and network.
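
A toy first-fit allocator shows what sharing a cluster among applications means at its simplest. The `Node` class, the worker names, and the resource requests are hypothetical; the schedulers named above use considerably richer policies such as queues, priorities, and preemption.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    cpu: int     # free CPU cores
    memory: int  # free memory in GB


def allocate(nodes: list[Node], requests: list[tuple[str, int, int]]) -> dict:
    """First-fit: place each (app, cpu, memory) request on the first node with room."""
    assignments = {}
    for app, cpu, memory in requests:
        for node in nodes:
            if node.cpu >= cpu and node.memory >= memory:
                # Reserve the resources so later requests see less capacity.
                node.cpu -= cpu
                node.memory -= memory
                assignments[app] = node.name
                break
        else:
            # No node has capacity; a real scheduler would queue the request.
            assignments[app] = None
    return assignments


cluster = [Node("worker-1", cpu=8, memory=32), Node("worker-2", cpu=4, memory=16)]
requests = [
    ("spark-job", 6, 24),
    ("stream-app", 4, 8),
    ("notebook", 2, 8),
    ("batch-etl", 4, 16),
]
assignments = allocate(cluster, requests)
print(assignments)
```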

Stream Processing Engines

Distributed stream processing engines like Storm, Spark Streaming, Flink, Kafka Streams, and Apex handle continuous flows of real-time data with low latency. They provide scalable and fault-tolerant data pipelines.
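
One common building block in stream processing is the tumbling window: events are grouped into fixed, non-overlapping time intervals and aggregated per key. The sketch below applies the idea to an in-memory list of hypothetical `(timestamp, key)` pairs. It ignores the things real engines must handle, such as out-of-order events, state recovery, and distribution across machines.

```python
from collections import defaultdict


def tumbling_window_counts(events, window_seconds: int) -> dict:
    """Count events per key in fixed, non-overlapping time windows."""
    counts = defaultdict(int)
    for timestamp, key in events:
        # Align the timestamp to the start of its window.
        window_start = timestamp - (timestamp % window_seconds)
        counts[(window_start, key)] += 1
    return dict(counts)


# (timestamp in seconds, sensor id)
events = [
    (0, "sensor-1"),
    (3, "sensor-2"),
    (9, "sensor-1"),
    (10, "sensor-1"),
    (14, "sensor-2"),
    (21, "sensor-1"),
]
result = tumbling_window_counts(events, window_seconds=10)
for (window_start, key), count in sorted(result.items()):
    print(f"[{window_start}, {window_start + 10}) {key}: {count}")
```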

Analytics Tools

Powerful analytics tools unlock insights. SQL engines support ad hoc querying. Machine learning libraries such as TensorFlow, PyTorch, and scikit-learn in Python, and caret in R, enable predictive analytics. Notebook environments such as Apache Zeppelin and Jupyter Notebook support interactive data exploration.

Data Visualization

Platforms like Tableau, Qlik, Power BI and open source tools like Apache Superset provide interactive dashboards to visualize trends, outliers and patterns across massive, multivariate datasets using smart charts, graphs, and maps.

This robust open source and commercial ecosystem empowers organizations to build comprehensive big data pipelines that deliver value.

Big Data Analytics Techniques

Advanced analytics techniques help uncover hidden patterns, unknown correlations and meaningful insights from big data across domains:

| Technique | Description |
| --- | --- |
| Data Mining | Data mining employs computational methods such as classification, clustering, regression, and prediction to discover interesting and useful patterns in large datasets. |
| Machine Learning | Machine learning uses statistical algorithms that learn and improve over time to make predictions from data without explicit programming. Supervised, unsupervised, and deep learning techniques are all used. |
| Natural Language Processing | NLP enables computers to process and analyze unstructured text, using semantic analysis to derive insights from human language. |
| Network Analysis | Analyzing relationships and flows among people, organizations, computers, URLs, and social networks surfaces patterns, trends, and key nodes. |
| Data Visualization | Creative visual representations like charts, graphs, and dashboards help humans grasp insights, trends, and anomalies in massive datasets. |
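
As a small illustration of one of these techniques, network analysis, the sketch below computes degree centrality, a simple measure of how connected each node is, to identify a key node. The edge list is made-up sample data, and degree centrality is only one of many possible measures.

```python
from collections import defaultdict


def degree_centrality(edges: list[tuple[str, str]]) -> dict[str, float]:
    """Fraction of the other nodes that each node is directly connected to."""
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)
    n = len(neighbors)
    if n < 2:
        return {}
    return {node: len(adjacent) / (n - 1) for node, adjacent in neighbors.items()}


# Hypothetical "who interacts with whom" relationships.
edges = [
    ("ana", "ben"),
    ("ana", "cy"),
    ("ana", "dee"),
    ("ben", "cy"),
    ("dee", "eli"),
]
scores = degree_centrality(edges)
key_node = max(scores, key=scores.get)
print(scores)
print(key_node)  # ana
```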